At the end of the 1960s, the Advanced Research Projects Agency (ARPA) of the US Department of Defence was the first agency to launch an interconnected communication project, called ARPANET, the forerunner of the Internet. Much has changed since then: the Internet’s explosive spread has changed the world and our perception of it. The network is now used daily by at least 5 billion people[1], hosts almost 2 billion websites[2], reaches every corner of the planet, offers an incalculable number of services and is the repository of an impressive amount of data.

In recent years it has continued to collect, at an exponentially growing rate, information concerning every aspect of our daily lives, starting with the traces of our use (personal data, photos, thoughts, purchases, movements, conversations and much more). This information occupies different layers of the network: from the public one, accessible to anyone who connects, to the most hidden and secret one, defended by more or less complex barriers worthy of science fiction.

Although there are organisations – which analyse search engines – that are committed to ensuring a minimum of transparency, the vastness of the content still generates enormous problems, and the truth is now so complex as to be unknowable. This huge amount of information is labelled ‘unstructured’. If methods were somehow devised to link the information together, to make its pieces talk to one another within a kind of intelligent structure, what would be the result? Power over information and global communication. A terrifying prospect, which has so far been the dream of Elon Musk, the South African-born multi-billionaire who, to this end, bought Twitter. But this alone will not be enough for him.

Recent years have seen the publication of studies designed to collect information from the net intelligently, by developing increasingly sophisticated algorithms. The interpretation of this information would produce real-time scenarios of a predictive nature, relating to the specific dynamics of markets, finance, health and security and, most interestingly, to human behaviour. There are obviously many hypothetical uses: profiling users in the business world of a given geographical area or a given social class; or, in medicine or security, improving prevention in the event of attacks. It is not difficult to imagine the doubts raised by this branch of science: the boundary of lawfulness in taking personal information and using it is very thin. Especially when one wants to profile billions of individuals.

The climb in the world of artificial intelligence

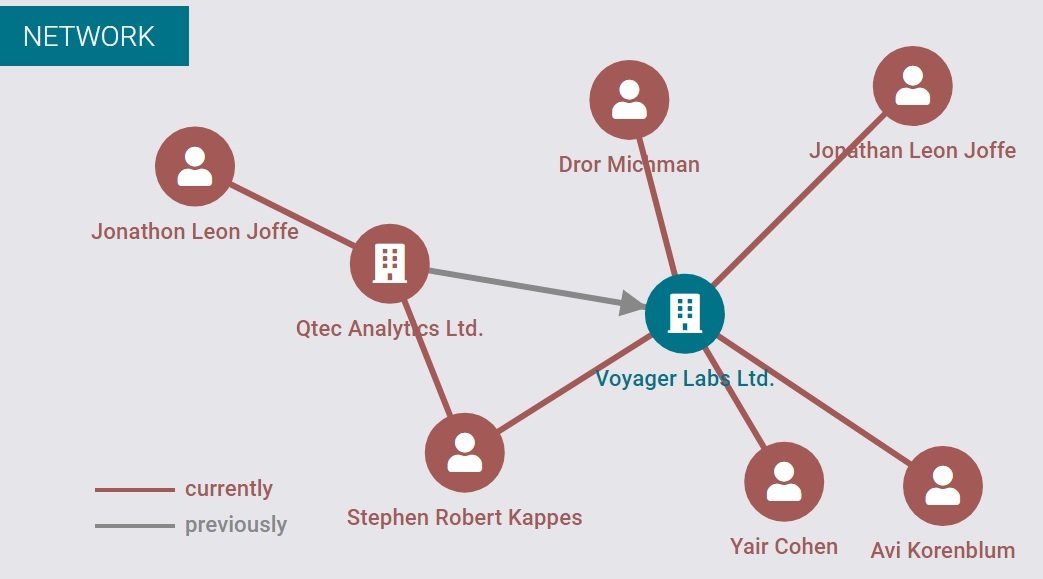

The network of Voyager Labs Ltd[3]

Avi Korenblum, born in Israel in June 1966, founded a small software company in 2010, after 20 years of service in Israeli intelligence and experience representing the Mossad at IDT Corporation, Verbx Communications and Fusion Telecommunications International[4]. Its specific mission, however, is what would make his fortune: to fish unstructured information out of the vast sea of the Internet and transform it, on the basis of specific requests, into organised and comprehensible answers. One of his travelling companions was the Briton Jonathan Leon Joffe[5], former director of QTEC Systems Limited[6], who would be in charge of the start-up[7], to be called Voyager Labs Ltd[8].

Not much is known about the first years of activity: the startup was launched in stealth mode, a way of operating that allows maximum confidentiality for both its activity and its accounts. Voyager Labs was first heard from in November 2016, when it came out of stealth mode[9]. During its incubation period, the small startup raised more than $100 million[10] in funding from backers such as British businessman Ronald Cohen[11], British entrepreneur Lloyd Marshall Dorfman[12], the Irish company OCAPAC Holding[13] and its owner Oracle, and Chinese tycoon Li Ka-Shing’s Horizons Ventures[14]. The company has a research and development centre in Tel Aviv and offices in New York, Washington and London, employs around 130 people, most of whom are scientists, data analysts and artificial intelligence experts[15], and has a portfolio of (secret) customers in every sector of global economic life[16].

The company has three departments: Voyager Analytics, Voyager Finance and Voyager eCommerce[17]. All products share the same philosophy: collect as much unstructured information as possible, including social media, and turn it into structured images through processing driven by complex algorithms. According to DoiT International[18], which provides IT support to Voyager Labs (large cloud spaces to securely store their data), the company collects an average of 10 billion pieces of data per day, amounting to 10 TB – an impressive figure[19].
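As a rough sanity check on the figures quoted by DoiT International, the two numbers can be divided to see what they imply per item. The sketch below assumes decimal terabytes (1 TB = 10^12 bytes) and treats both figures as daily averages; the function and constant names are illustrative and do not come from any source.

```python
# Figures as quoted in the text above; the unit convention is an assumption.
ITEMS_PER_DAY = 10_000_000_000   # "10 billion pieces of data per day"
BYTES_PER_DAY = 10 * 10**12      # "10 TB", assuming 1 TB = 10^12 bytes


def average_bytes_per_item(total_bytes: int, items: int) -> float:
    """Return the mean size of one collected item, in bytes."""
    return total_bytes / items


avg = average_bytes_per_item(BYTES_PER_DAY, ITEMS_PER_DAY)
print(f"{avg:.0f} bytes per item")  # 1000 bytes per item
```

Under these assumptions each collected item averages about one kilobyte. That would be consistent with short text records rather than images or video, although the source does not say what counts as a "piece of data".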

In each of its public presentations, Voyager Labs is at pains to stress that its software collects only public information, and therefore operates in total transparency. Critics say otherwise, pointing to the forums[20] of the NDIA (the National Defense Industrial Association, a US defence-industry trade association[21]) and to the seminars organised by ISS World Middle East (an association of cyber-espionage companies[22]), which speak of “usable and previously unreachable information by analysing and understanding huge amounts of open, deep and obscure Web data”[23]. A truly disturbing presentation.

A controversial former CIA agent

The swearing-in ceremony of Leon Panetta as CIA Director, with Stephen Kappes (Voyager Labs), Dennis Blair, Sylvia Panetta and Joe Biden[24]

Success was immediate, and it needed political cover. In December 2015, a few weeks before the company’s public unveiling, Stephen Robert Kappes joined the company[25]: born on 22 August 1951 in Cincinnati, Kappes is married, has two children[26], speaks Farsi and Russian[27] and has a Bachelor’s and Master’s of Science in Pathology from Ohio State University[28]. After graduation, he enlisted in the Marine Corps (1976) and rose rapidly through the ranks until, in 1981, he joined the CIA, where he held numerous positions of responsibility: division chief in New Delhi, Frankfurt, Kuwait City, Moscow and Pakistan, then in the Near East and South Asia until 2000; he returned to America as Deputy Assistant Director for Counterintelligence Operations and then, in 2004, took over from Deputy Director of Operations James Pavitt[29].

In this role he was one of the supervisors, together with Pavitt, of CIA operations during the compilation of the controversial report on weapons of mass destruction in Iraq[30]. He resigned after the mess became public knowledge, and a few days later the Deputy Director, John E. McLaughlin, also resigned[31]. In May 2006, Kappes was recalled and nominated Deputy Director of the CIA by John Negroponte[32], a position he held until 2009: his objective was to relaunch the image of the CIA after years of scandals[33]. Kappes did not succeed, because several bigwigs in the intelligence services were against him: they considered him guilty of ‘gross insubordination’ and had him impeached[34].

In 2009, Kappes was convicted in absentia by an Italian court for having directed, in 2003, the kidnapping, rendition and torture of the Egyptian citizen Abu Omar, who was seized in Milan by CIA agents and transferred to Egypt, where he was tortured[35]. His style was already well known: he asked Obama to ‘re-establish secret prisons and use aggressive interrogation methods’[36]. On 14 April 2010, Kappes resigned in a heated atmosphere: an operation he was in charge of had turned into a massacre due to ‘carelessness’, i.e. a failure to search. On 30 December 2009, a Jordanian wearing an explosive vest was let into the CIA’s Afghan base. Seven CIA officers died, including the base chief[37], and Kappes was investigated[38].

It was not an honourable exit: for many observers, he is one of the principal architects of the numerous failures of the American intelligence agency[39]. In April 2005, he joined ArmorGroup International as Director and member of the Board of Directors[40]. He was subsequently appointed Director of Qtec Analytics Ltd (January 2013) and Director of Quest Global Holdings Ltd[41], two cybersecurity companies. In December 2015 he began his venture with Voyager Labs.

Voyager Analytics under indictment

In April 2020, a scandal swept through the Colombian Security Forces: officials had spied on the 2016 talks with the Revolutionary Armed Forces of Colombia (FARC) rebels, which ended in a peace agreement. The military improperly collected information on more than 130 people, including journalists from foreign and domestic media, politicians and NGO representatives[42], in an attempt to profile their behaviour[43]. The espionage operation used software from Voyager Labs, purchased a couple of years earlier[44]. On 1 May 2020, the Colombian Minister of Defence, Carlos Holmes Trujillo, announced the expulsion of 11 officers, while one general voluntarily resigned[45].

In August 2020, Voyager Labs becomes involved in the historic conflict between the Chilean government and the indigenous Mapuche people. In the crosshairs is Operation Hurricane[46], during which, through searches and arrests by the Chilean Carabineros, an attempt is made to intimidate the Mapuche community, fabricating false evidence to blame some families for terrorism[47]. The operation was carried out with the involvement of the Chilean Specialised Operative Intelligence Unit[48], using Voyager Labs software to profile Mapuche leaders[49].

A similar case occurred in Spain: in January 2021, the CTTI Center for Telecommunications and Information Technologies of the Generalitat de Catalunya spent 1.5 million euros to acquire two platforms, Voyager Analytics and Voyager Check, for the official purpose of refining the fight against jihadist terrorism[50]. But there is strong concern that such tools could be used for abusive control of ordinary communities[51]: the Mossos d’Esquadra (Catalonia’s police force) are no strangers to computer hacking and espionage[52]. The list of violations is long. Going back in time, the CESICAT Centre for Information Security of Catalonia, no longer operational, was accused of profiling social activists and journalists and spying on their behaviour: a parliamentary enquiry led to a request for the resignation of the then Minister of the Interior, Felip Puig[53].

Last but not least comes the Italian criminal investigation. In an inquiry opened by the Prosecutor’s Office of Florence in November 2019, the main suspects are the lawyer Alberto Bianchi and his friend Marco Carrai; the charges are trafficking in influence and illegal party financing. At the centre of the scandal is the Open Foundation, created by Bianchi himself to finance the political activities of Matteo Renzi, former Prime Minister, leader and founder of the Italia Viva party. Towards the end of 2021, it was revealed that Marco Carrai allegedly had a meeting in 2016 with Avi Korenblum, founder of Voyager Labs, to buy the company’s software with the aim of “monitoring and influencing the campaign” for the constitutional referendum that, if approved, would have profoundly changed the Constitution[54]. Renzi lost the referendum, resigned, and his political fortunes quickly began to decline.

Is the LAPD violating the First Amendment?

Criminal organisations are increasingly using social networks as a communication vehicle. Voyager Labs offers police analysis tools that promise to foil criminal acts[55]

The use of social media against crime is on the rise: a survey conducted by the International Police Association in 2017 states that 70% of police departments use social media to monitor their citizens[56]. Voyager Labs’ software is also being purchased by the Los Angeles Police Department – a body that has already been using highly contested tools for some time, such as Geofeedia[57], which processes the geographical positioning of individuals by exploiting social media, or Media Sonar[58] and Dunami[59], two pieces of software useful for individual profiling through data collected on the net[60]. The information from these software packages is managed globally by Palantir’s Gotham platform[61], one of the most controversial and powerful tools of police forces around the world[62].

The suspicion that police forces are making unscrupulous use of this software is very high. In January 2020, the Brennan Center for Justice asked the Los Angeles Police Department to report on the methods used to gather information on individuals, groups and activities through the use of social media such as Facebook, Twitter and Instagram[63]. The response arrived on 24 February[64], but was deemed partial and unsatisfactory[65]. On 17 November 2020, the Brennan Center filed a lawsuit in California Superior Court against the police department[66], forcing it to produce additional documentation: between March and September 2021[67], 12 files with a total of about 10,000 pages arrived in response[68].

From the documents, which the Brennan Center made public in November 2021, a picture emerges that borders on a violation of the First Amendment: the department confirms that it is making massive use of investigative tools on social networks, intervening undercover using fake profiles, collecting personal information of all kinds, and exploiting predictive software such as Voyager Analytics[69]. It is above all the way these tools operate that leaves many doubts, both in the methods of collection and in the results: the information is acquired without leaving a trace, and it is used to reconstruct closed social profiles or closed social groups (thus violating the rules of the platforms), to analyse the relationships between the various profiles, and to identify the users ‘most involved in a given position: emotionally, ideologically and personally’[70]. All this without any auditing of procedures or personal accountability for the methods of data collection, use and storage[71].

In a Voyager Labs document[72] describing an operation against Islamic terrorism, it is admitted that its tools can automatically monitor and classify people according to their risk of becoming Islamic fundamentalists. The results are colour-coded (green, orange and red) on the basis of ‘artificial intelligence calibrations’, information obtained within minutes and without human involvement – and without the citizen suspect being able to defend himself[73].

7 July 2016: Thousands of people take to the streets in New York and other American cities to shout ‘Black Lives Matter’ against Geofeedia and the undemocratic use of police surveillance software[74]

A frightening method, if one thinks that, to be identified as a probable Islamic terrorist, it is sufficient to have an unwitting connection on Facebook, perhaps created years before and now forgotten, with someone who, once in his life, typed “Allah is great”. So frightening that even a representative of the LAPD expresses concern about the risk that Voyager’s monitoring mechanisms could become unmanageable[75], a concern sharpened by the memory of the violent protests caused by law enforcement’s use of Geofeedia in 2016[76].

No one can know how many people around the world have been classified as Islamic terrorists. This information is held by Voyager Labs and is not accessible to any legal authority: it is a secret asset with immense destructive power because, if used by the intelligence services, it can take anyone to Guantanamo without any responsibility for anything. This is the Hamletian doubt of the fundamental principle of the democratic concept of justice: the one who is proven guilty of a crime should be punished, and never someone who is suspected of knowing someone who would like to commit it one day.

The Brennan Center believes that these methodologies can easily produce aberrant results. Arrests based on artificial intelligence findings risk indiscriminately targeting innocent activists and communities of colour[77]. On 7 December 2021, the Brennan Center returned to the task[78], asking the Office of Intelligence and Analysis of the US Department of Homeland Security and Immigration and Customs Enforcement for information on the use of tools developed by Voyager Labs, ShadowDragon and Logically Inc[79]. The requests, for different reasons, fell on deaf ears[80].

Facebook does not like Voyager Labs

Facebook asks LAPD to suspend practices that violate platform rules[81]

At the beginning of 2018, Zuckerberg found himself dealing with the hurricane that hit his creation, Facebook: the Cambridge Analytica scandal[82]. Having been accused of failing to protect the data of more than 87 million users (data stolen for use in political propaganda), Facebook has developed a strong sensitivity about protecting the personal information of its users, not least because the affair has cost it a good $643,000 in court settlements[83]. One of its moves was to close the accounts of members of the Cybersecurity for Democracy team[84] in August 2021: the researchers were collecting information from the network to conduct research on Covid-19, using a browser extension to circumvent detection systems and collect data such as usernames, ads, and links to user profiles, some of which were not publicly visible. According to Facebook, this collection, as with Cambridge Analytica, violated users’ privacy[85].

In November 2021, the Los Angeles Police Department stated that Facebook did not like Voyager Labs’ software, which was used too casually. According to Facebook, such software violates privacy, but the most important accusation is aimed at police officers who use fake accounts to act undercover, a blatant violation of the platform’s standards – an activity shared by the Californian and Russian police. Facebook (Meta) demands the cessation of these activities[86]. In vain. This is not the first time that Facebook has intervened by ordering the police to suspend investigative activities through the use of fake accounts: it happened already in 2018 with the Memphis police, but in that case Facebook had managed to have the accounts suspended[87].

According to documents published by the Brennan Center, Twitter was also used for the indiscriminate collection of information using a monitoring tool, ABTShield, developed by the Polish EDGE NPD[88]: ABTShield collected so many tweets that it made the software itself go haywire and forced EDGE NPD to stop its activities. A huge volume of data continued to be collected throughout the test period, totalling almost 2 million tweets, an average of about 70,000 per day. According to EDGE NPD, the software collected about 200 million tweets during the trial period, sending only a fraction of them to the LAPD[89]. The rest remains in the company’s databases, and who knows how, and by whom, it will be used.
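The figures quoted above can be cross-checked with simple arithmetic. The sketch below, whose function and constant names are illustrative, computes how many days of collection each total would imply at the quoted average of about 70,000 tweets per day, assuming a constant daily rate.

```python
def implied_days(total_tweets: int, tweets_per_day: int) -> float:
    """Days needed to reach total_tweets at a constant daily rate."""
    return total_tweets / tweets_per_day


DAILY_AVERAGE = 70_000         # "about 70,000 per day"
FIRST_TOTAL = 2_000_000        # "almost 2 million tweets"
SECOND_TOTAL = 200_000_000     # "about 200 million tweets"

print(round(implied_days(FIRST_TOTAL, DAILY_AVERAGE)))   # 29
print(round(implied_days(SECOND_TOTAL, DAILY_AVERAGE)))  # 2857
```

At that rate the first total corresponds to roughly a month of collection, while the second would take almost eight years. The two totals therefore presumably measure different things, but the text does not say what each one covers.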

The big bluff

Deep learning applied in the detection of violent behaviour through images[90]

“This is hyperbolic artificial intelligence marketing. The more they brag, the less I believe it”: according to Cathy O’Neil, data scientist and algorithm auditor, Voyager Labs’ promises have nothing scientific about them. “They’re telling us, ‘We can see if someone has criminal intent.’ No, you can’t. Not even in the case of people who commit crimes can you predict that they have criminal intentions”[91]. One example is the predictive policing introduced in Santa Cruz, California, in 2011: nine years later, the city council voted unanimously to ban it, because the method exacerbated racial inequalities[92]. As if there was any need.

The idea that there are predictive risk indicators that can be used successfully is discredited by decades of academic research[93]. There is no evidence that such software has brought benefits in the fight against terrorism and crime, but on the contrary, it has proven to be discriminatory, divisive and destructive to communities. Particularly in the area of Islamic terrorism, where Voyager Labs has worked most intensively, analysis techniques are based on concepts that have been shown to be totally aberrant, such as considering Islamic radicalisation an automatism that leads to terrorism[94].

In 2017, Voyager Labs was named ‘Cool Vendors in AI for Banking and Investment Services 2017’[95] by the American giant Gartner[96]. In November of the same year, it also received the 2017 European New Product Innovation Award[97] from the prestigious consultancy Frost&Sullivan[98]. In September 2020, it won the 2020 Open Source Technology Innovation of the Year Award[99] from the OSMOSIS Institute, which trains investigators, researchers, journalists and cyber intelligence analysts in Open Source Intelligence techniques and practices[100]. In November 2020, it was awarded ‘Best AI Industry Solution for Intelligence’[101] in the AI Breakthrough Awards 2020, conducted by the leading intelligence organisation of the same name[102].

The accolades are there, but real evidence of effectiveness is lacking. Yet there are those who believe in it and are betting heavily on predictive techniques: in November 2021, Voyager Labs arrived in Japan and, in partnership with Terilogy Worx[103], signed an important agreement with a Japanese government agency to supply its platforms for combating terrorism and crime[104]. The signing came a few months after another important agreement, this time between Voyager Labs and Microsoft, under which several of its platforms join the “Microsoft One Commercial Partner” programme for the joint marketing of software with Microsoft’s global sales teams, spread across 170 countries[105].

Voyager Labs therefore continues to find customers. It is only to be hoped that the method matures and becomes effective, and above all that it causes as little collateral damage as possible. When military technology aims not to help but to replace human intelligence, disquiet takes the place of fascination: the risk of making irreparable and inhuman mistakes is dramatically real. Expressing an opinion cannot in itself be considered a crime or a precursor to violence. In the past, fighting for civil rights or for women’s right to vote was considered a form of terrorism. This is the way to repress ideas, not violence.

[1] https://www.statista.com/statistics/617136/digital-population-worldwide/

[2] https://www.internetlivestats.com/total-number-of-websites/

[3] https://www.northdata.com/Voyager+Labs+Ltd.,+London/Companies+House+07154170

[4] https://www.topionetworks.com/people/avi-kornblum-5833be77385326fe88000011

[5] https://www.cbetta.com/director/jonathan-leon-joffe

[6] https://suite.endole.co.uk/insight/people/17092653-mr-jonathan-leon-joffe

[7] https://find-and-update.company-information.service.gov.uk/officers/JGerG860MZIbPkKxIPxeewVDpM4/appointments

[8] https://find-and-update.company-information.service.gov.uk/company/07154170

[9] https://insidebigdata.com/2016/11/02/voyager-labs-emerges-from-stealth-mode-with-next-gen-cognitive-computing-deep-insights-platform/

[10] https://equityzen.com/company/voyageranalytics/

[11] https://sirronaldcohen.org/ ; https://insidebigdata.com/2016/11/02/voyager-labs-emerges-from-stealth-mode-with-next-gen-cognitive-computing-deep-insights-platform/

[12] https://www.forbes.com/profile/lloyd-dorfman/?sh=5d24653b227f ; https://insidebigdata.com/2016/11/02/voyager-labs-emerges-from-stealth-mode-with-next-gen-cognitive-computing-deep-insights-platform/

[13] https://ie.globaldatabase.com/company/ocapac-holding-company-unlimited-company

[14] https://www.haaretz.com/.premium-startup-voyager-labs-goes-public-after4-years-1.5455813

[15] https://www.datanami.com/2017/08/22/guiding-unstructured-data-analytic-journeys/

[16] https://www.haaretz.com/.premium-startup-voyager-labs-goes-public-after4-years-1.5455813

[17] https://www.datanami.com/2017/08/22/guiding-unstructured-data-analytic-journeys/

[18] https://www.doit-intl.com/

[19] https://www.doit-intl.com/clients/voyager-labs/

[20] https://www.issworldtraining.com/iss_mea/

[22] https://www.issworldtraining.com/iss_mea/

[23] https://www.ndia.org/-/media/sites/ndia/meetings-and-events/2021/march/1se1-nsaice/nsaice_program_final.ashx

[24] https://www.zimbio.com/photos/Leon+Panetta/Stephen+Kappes/fO9SgNVhZRY/Ceremonial+Swearing+Leon+Panetta+Held+CIA

[25] https://companycheck.co.uk/company/07154170/VOYAGER-LABS-LIMITED/companies-house-data

[26] https://web.archive.org/web/20070612215306/https:/www.cia.gov/about-cia/leadership/kappes.html

[27] https://www.washingtonpost.com/wp-dyn/content/article/2006/06/18/AR2006061800779.html

[28] https://stringfixer.com/fr/Stephen_R._Kappes

[29] https://hmong.es/wiki/Stephen_Kappes

[30] https://georgewbush-whitehouse.archives.gov/wmd/report.html

[31] https://en-academic.com/dic.nsf/enwiki/926565

[32] https://en-academic.com/dic.nsf/enwiki/926565

[33] https://www.chicagotribune.com/chinews-mtblog-2007-09-cia_official_comes_in_from_the-story.html

[34] https://www.washingtonpost.com/wp-dyn/content/article/2006/06/18/AR2006061800779.html

[35] https://www.usnews.com/opinion/articles/2010/11/11/milans-botched-cia-caper-and-the-war-on-terrorism

[36] https://www.washingtonian.com/2010/03/25/inside-man/

[37] https://www.washingtonian.com/2010/03/25/inside-man/

[38] https://shadowproof.com/2010/04/15/steven-kappes-leaves-the-agency-again/

[39] https://www.washingtonian.com/2010/03/25/inside-man/

[40] https://web.archive.org/web/20070612215306/https:/www.cia.gov/about-cia/leadership/kappes.html

[41] https://www.company-director-search.co.uk/director-stephen-robert-kappes

[42] https://www.elespectador.com/judicial/nuevo-escandalo-en-inteligencia-militar-11-oficiales-y-un-general-salieron-del-ejercito-article-917432/

[43] https://www.reuters.com/article/colombia-hacking-idINKBN22E032

[44] https://www.elespectador.com/judicial/las-carpetas-secretas-de-inteligencia-militar-a-quienes-iban-dirigidas-y-para-que-article-917751/

[45] https://www.elespectador.com/judicial/los-militares-que-salieron-del-ejercito-en-medio-del-nuevo-escandalo-de-chuzadas-article-917639/

[46] https://www.ciperchile.cl/2018/02/14/operacion-huracan-la-trama-que-dinamito-los-puentes-entre-carabineros-y-la-fiscalia-de-temuco/

[47] https://www.ciperchile.cl/2018/02/14/operacion-huracan-la-trama-que-dinamito-los-puentes-entre-carabineros-y-la-fiscalia-de-temuco/

[48] https://www.ciperchile.cl/2018/02/14/operacion-huracan-la-trama-que-dinamito-los-puentes-entre-carabineros-y-la-fiscalia-de-temuco/

[49] https://rebelion.org/softwares-de-vigilancia-isralies-utilizados-para-violaciones-a-dd-hh/

[50] https://directa.cat/els-munoz-grandes-una-nissaga-entre-la-division-azul-i-el-negoci-de-la-ciberseguretat-destat/

[51] https://directa.cat/els-munoz-grandes-una-nissaga-entre-la-division-azul-i-el-negoci-de-la-ciberseguretat-destat/

[52] https://directa.cat/comunicacions-suplantades/

[53] https://directa.cat/comunicacions-suplantades/

[54] https://pledgetimes.com/open-renzi-and-the-israeli-software-to-direct-the-vote-meeting-in-chigi/

[55] https://www.theguardian.com/us-news/2021/nov/17/police-surveillance-technology-voyager

[56] https://chicagojustice.org/2021/10/09/deep-dive-in-to-lapd-social-media-surveillance/

[58] https://www.brennancenter.org/our-work/analysis-opinion/lapd-documents-reveal-use-social-media-monitoring-tools

[59] https://www.dunami.ca/solutions.html

[60] https://www.brennancenter.org/sites/default/files/2020-02/20200130%20LA%20SMM%20CPRA%20Request_0.pdf

[61] https://www.palantir.com/platforms/gotham/

[62] https://www.buzzfeednews.com/article/carolinehaskins1/training-documents-palantir-lapd

[63] https://www.brennancenter.org/sites/default/files/2020-02/20200130%20LA%20SMM%20CPRA%20Request_0.pdf

[64] https://lacity.nextrequest.com/requests/20-719

[65] https://www.brennancenter.org/our-work/research-reports/lapd-social-media-monitoring-documents#j-series

[66] https://www.brennancenter.org/sites/default/files/2021-04/2020-12-08%20First%20Amended%20Complaint.pdf

[67] https://lacity.nextrequest.com/requests/20-719

[68] https://www.brennancenter.org/our-work/research-reports/lapd-social-media-monitoring-documents#j-series

[69] https://www.brennancenter.org/our-work/research-reports/lapd-social-media-monitoring-documents#j-series

[70] https://www.brennancenter.org/our-work/research-reports/lapd-social-media-monitoring-documents#j-series

[71] https://www.brennancenter.org/our-work/research-reports/lapd-social-media-monitoring-documents#j-series

[72] https://www.brennancenter.org/sites/default/files/2021-11/J955-959-%20Corpus%20Christi.pdf

[73] https://www.brennancenter.org/sites/default/files/2021-11/J955-959-%20Corpus%20Christi.pdf

[74] https://www.nbcnews.com/tech/internet/facebook-twitter-instagram-block-geofeedia-tool-used-police-surveillance-n664706

[75] https://www.brennancenter.org/sites/default/files/2021-11/J2712-2713-%20Email%20re%20Trial%20Midpoint.pdf

[76] https://www.theguardian.com/technology/2016/oct/11/aclu-geofeedia-facebook-twitter-instagram-black-lives-matter

[77] https://www.brennancenter.org/our-work/analysis-opinion/lapd-documents-show-what-one-social-media-surveillance-firm-promises

[78] https://www.brennancenter.org/sites/default/files/2022-02/FOIA%20request-%20Social%20Media%20Monitoring%20%2812.07.2021%29.pdf

[79] https://www.brennancenter.org/our-work/research-reports/brennan-center-files-freedom-information-act-requests-information-dhss

[80] https://www.brennancenter.org/our-work/research-reports/brennan-center-files-freedom-information-act-requests-information-dhss

[81] https://screenrant.com/facebook-demands-lapd-stop-using-fake-accounts-surveillance/

[82] CAMBRIDGE ANALYTICA: I CRIMINALI CHE CI CONVINCONO A VOTARE TRUMP | IBI World Italia

[83] https://www.npr.org/2019/10/30/774749376/facebook-pays-643-000-fine-for-role-in-cambridge-analytica-scandal?t=1651421979443

[84] https://cybersecurityfordemocracy.org/

[85] https://www.bbc.com/news/technology-58086628

[86] https://about.fb.com/wp-content/uploads/2021/11/LAPD-Letter.pdf

[87] https://www.eff.org/deeplinks/2018/09/facebook-warns-memphis-police-no-more-fake-bob-smith-accounts

[88] https://www.brennancenter.org/our-work/analysis-opinion/documents-reveal-lapd-collected-millions-tweets-users-nationwide

[89] https://www.brennancenter.org/our-work/analysis-opinion/documents-reveal-lapd-collected-millions-tweets-users-nationwide

[90] https://readyforai.com/article/the-status-and-problems-of-machine-learning-crime-prediction/

[91] https://www.theguardian.com/us-news/2021/nov/17/los-angeles-police-surveillance-social-media-voyager

[92] https://www.eff.org/deeplinks/2020/09/technology-cant-predict-crime-it-can-only-weaponize-proximity-policing

[93] https://www.brennancenter.org/our-work/research-reports/why-countering-violent-extremism-programs-are-bad-policy

[94] https://www.brennancenter.org/sites/default/files/CVE%20Chart%20Terrorism%20Indicators_final.pdf

[95] https://www.prnewswire.com/news-releases/voyager-labs-named-a-2017-cool-vendor-by-gartner-300472386.html

[96] https://www.gartner.com/en/about

[97] https://www.prnewswire.com/in/news-releases/frost–sullivan-recognizes-voyager-labs-for-its-innovative-ai-based-social-behavior-analytics-solution-657441853.html

[99] https://www.prnewswire.com/il/news-releases/voyager-labs-awarded-the-2020-osmosis-open-source-technology-innovation-of-the-year-for-its-innovative-solution-in-applying-ai-to-enable-investigators-and-analysts-to-acquire-previously-unattainable-insights-from-massive-amounts-301145722.html

[100] https://www.osmosiscon.com/about-us/

[101] https://www.prnewswire.com/news-releases/voyager-labs-awarded-best-ai-industry-solution-for-intelligence-for-its-outstanding-work-in-applying-ai-to-accelerating-investigations-mitigating-risk-and-acquiring-actionable-previously-unattainable-insights-301165762.html

[102] https://aibreakthroughawards.com/

[103] https://www.terilogy.com/english

[104] https://look-travels.com/voyager-labs-fait-une-percee-au-japon-avec-une-victoire-strategique-cle-business-news/

[105] https://www.world-today-news.com/voyager-labs-signs-partnership-with-microsoft-to-offer-ai-based-saas-research-platforms-to-strengthen/
