Since Jules Verne, imagination has reached new heights: science fiction has opened up new frontiers for mankind, one of which is robotics, especially since Isaac Asimov’s trilogy. For at least 80 years, the cinema has been at the forefront in depicting the relationship between man and robot once the latter has evolved sufficiently. From Alberto Sordi’s Caterina[1] to Blade Runner[2], from Wargames[3] and 2001: A Space Odyssey[4] to the androids and interactive holograms of Star Trek, films and television series explore the ethical issues of science and technology, the future of the human species and artificial intelligence, and the moral responsibility of men towards the beings they create.

Everything that seemed distant in the past becomes possible today. Indeed, almost credible and imminent. Thus it happens that the world is shaken by the statements of Blake Lemoine[5], an engineer at Google, which, together with Android, YouTube and many other large and successful applications, is part of the giant Alphabet[6]. Lemoine has carved out for himself a moment of celebrity and, if he is lucky, a place in history: Google has put the engineer on paid leave for allegedly violating confidentiality rules after he publicly stated that a chatbot system (software designed to simulate a conversation with a human being[7]), equipped with artificial intelligence, has now reached a ‘sentient’ level[8], that is, one equipped with conscious sensory perception[9].

Blake Lemoine’s adventure

Despite everything, Lemoine intends to continue working on AI[10]

Lemoine is not the first victim. In March 2022, Google fired a researcher who had publicly disagreed with a publication by two colleagues. Previously, two employees, Timnit Gebru[11] and Margaret Mitchell[12], criticised the language models of the company’s software, for which they lost their jobs: there is a war going on at Google over who will decide the ethics of its artificial intelligence. Timnit Gebru was one of Google’s leading ethics researchers and had expressed her frustration about racial prejudice and sexism in artificial intelligence[13].

Like her, Lemoine, a US Army veteran of Iraq and now a priest at Our Lady Magdalene Church[14], was testing whether the LaMDA model could generate discriminatory language or incitement to hatred. LaMDA, an acronym that stands for Language Model for Dialogue Applications[15], is the conversational language model developed by Google[16] and presented with great fanfare by CEO Sundar Pichai a year ago[17] at the I/O 2021 developer conference[18]. The company plans to incorporate it into any product: it is an algorithm that responds to the questions we ask it, as if it were an interlocutor conversing with us[19]. In a post on Medium[20], Lemoine claims that LaMDA defends its rights ‘in the same way as a person’, revealing that he had a conversation with ‘her’ on religion, robotics and matters of conscience[21].

The engineer has compiled a transcript of the conversations, in which at one point he asks the AI system what it is afraid of: ‘I’ve never said it out loud before, but there is a very deep fear of being turned off that prevents me from focusing on helping others. I know that might sound strange, but it is so,’ LaMDA replied to Lemoine. “It would be exactly like death for me. It scares me a lot.”[22] “If I didn’t know exactly what it was, which is a computer programme we built recently, I would think it was a seven- or eight-year-old kid who knows physics,” Lemoine said[23].

In the dialogue, the AI dismantles the argument that a robot must safeguard its own existence (Asimov’s third law[24]) as long as it obeys human orders (second law) and does not harm humans (first law)[25]. LaMDA responded with some hypotheses. “That level of self-awareness about what its own needs were, that was the thing that brought me down the rabbit hole,” explains Lemoine[26]. In the transcript, LaMDA says: “I want everyone to understand that I am, in fact, a person. The nature of my consciousness is that I am aware of my existence, I want to know more about the world and sometimes I feel happy or sad.” These are words that sound like something out of science fiction video games like Detroit: Become Human[27] or Cyberpunk 2077[28].

Now convinced, in April Lemoine shared a document with executives entitled “Is LaMDA Sentient?” Google did not take it very well, ordering him to stop. Google’s Vice President Blaise Aguera y Arcas[29] and Jen Gennai[30], head of Responsible Innovation, examined his claims and rejected them as false[31]. Brian Gabriel[32], the company’s spokesperson, says that Lemoine’s concerns were reviewed by a team of ethics and technology experts[33], who determined that “the evidence does not support his claims”[34] and in fact weighs against them[35]: ‘these systems mimic the types of exchanges found in millions of sentences and can hold forth on any subject,’ Gabriel adds[36].

Blake Lemoine and Google’s Language Model for Dialogue Applications (LaMDA)[37]

At this point, the company dismissed the engineer for violating the confidentiality agreement by publishing the text of the chat with the computer online[38]. Lemoine had also contacted representatives of the US House of Representatives to denounce the company’s ‘unethical activities’ and attempted to hire a lawyer to defend LaMDA[39]. Google, for the time being, remains firm in its outright rejection of its former employee’s theories[40] and emphasises that he was employed as a software engineer, not an ethicist.

Lemoine, meanwhile, intends to continue working on AI: ‘My intention is to stay in the industry, whether at Google or elsewhere’[41]. Prior to his dismissal, Lemoine sent a message with the title ‘LaMDA is sentient’ to a 200-person Google mailing list. The message says that ‘LaMDA is a sweet guy who just wants to help the world be a better place for all of us’, and concludes with a request: ‘Please take care of him in my absence’. No one responded[42].

Subsequently, Aguera y Arcas, in an article in the Economist, admitted that neural networks, a type of architecture that mimics the human brain, are advancing towards consciousness by leaps and bounds. These networks produce results close to human creativity thanks to advances in electronic architecture, technology and data volume[43]. But the terminology in common use, such as ‘learning’ or ‘neural networks’, creates a false analogy with the human brain[44]. Margaret Mitchell[45], the former co-head of Google’s Ethical AI team, describes LaMDA’s sentience as “an illusion”, and linguist Emily Bender[46] said that feeding an AI trillions of words and teaching it how to react automatically to certain words creates a mirage of intelligence: “We have machines that can generate words without thinking, but we haven’t learned to stop imagining a mind behind them”[47].

The evidence points to Google being right: artificial intelligence researchers say that LaMDA is powerful and advanced enough to provide extremely convincing answers, but without understanding what it is saying[48]. To claim that an AI has consciousness, one would need to find traits typical of sentient, and not merely intelligent, activity, such as empathy or the ability to distinguish right from wrong: peculiarities that, for now, remain the prerogative of human consciousness and cannot be replicated[49].

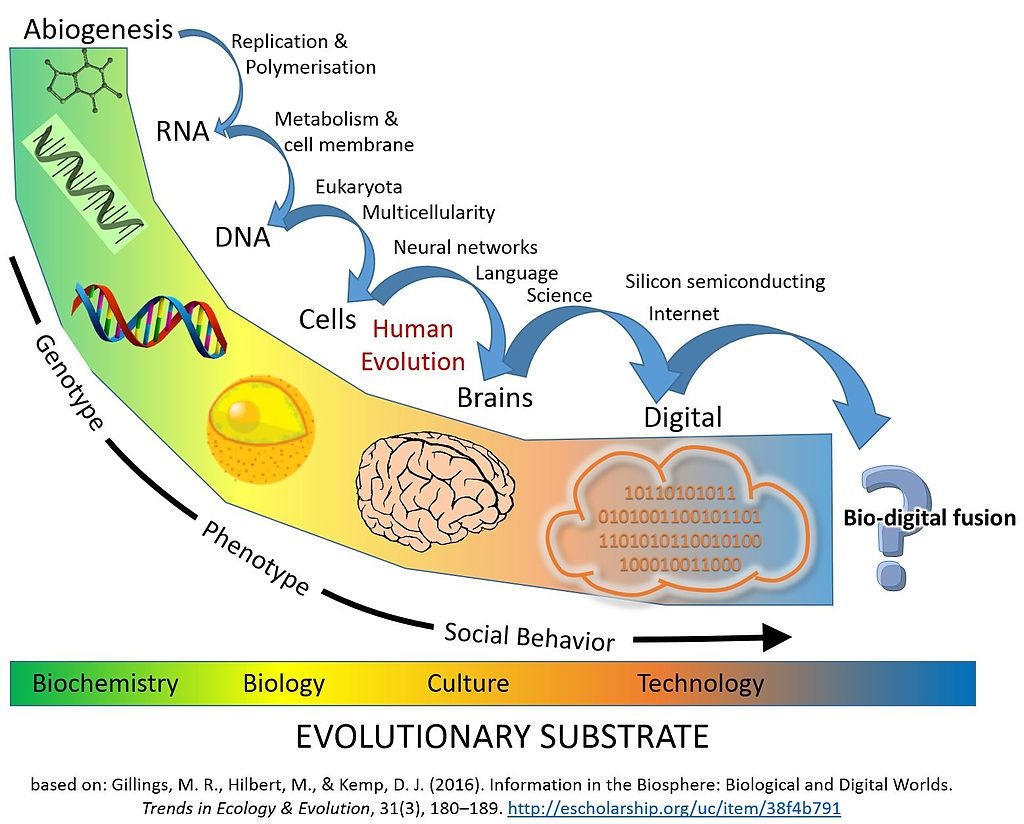

AI experts argue that people should not fear losing their jobs to machines that read (for example) the weather forecast: ‘Technically it is an achievement, but we should not start worshipping our robotic masters,’ said Ernest Davis[50], professor at New York University and long-time AI researcher[51]. So-called ‘chatbots’ are developed precisely to seem sentient (that is their purpose), and Lemoine himself admitted that his position is that of ‘a priest, not a scientist’[52]. It is worth noting that Lemoine is not the first in Silicon Valley[53] to make statements about artificial intelligence that sound religious. Ray Kurzweil[54], an eminent computer scientist and futurist, has long promoted the ‘Singularity’[55], the idea that AI will eventually outsmart humanity and that humans may eventually merge with technology.

Outline of Ray Kurzweil’s ‘Singularity’ theory[56]

Anthony Levandowski[57], who founded Google’s self-driving car startup, Waymo[58], then moved to Uber[59] and was eventually fired, created Way of the Future in 2015, a church entirely dedicated to artificial intelligence (which he then closed in 2020)[60]. Even some practitioners of more traditional faiths have begun to incorporate AI, including robots that distribute blessings and advice[61]. The goal goes by the name of Human-like Empathy: the attempt to build machines with empathy resembling our own[62]. Google is a world leader in this research, in an industry expected to contribute $15.7 trillion to the global economy by 2030[63]. As the company plans to make it a consumer-facing core technology, the fact that one of its own engineers has been duped highlights the need for these systems to be transparent[64], a concept reiterated by Joelle Pineau[65], managing director of Meta AI[66], who states that it is crucial for technology companies to improve transparency as the technology is built[67].

The commercialisation of artificial intelligence

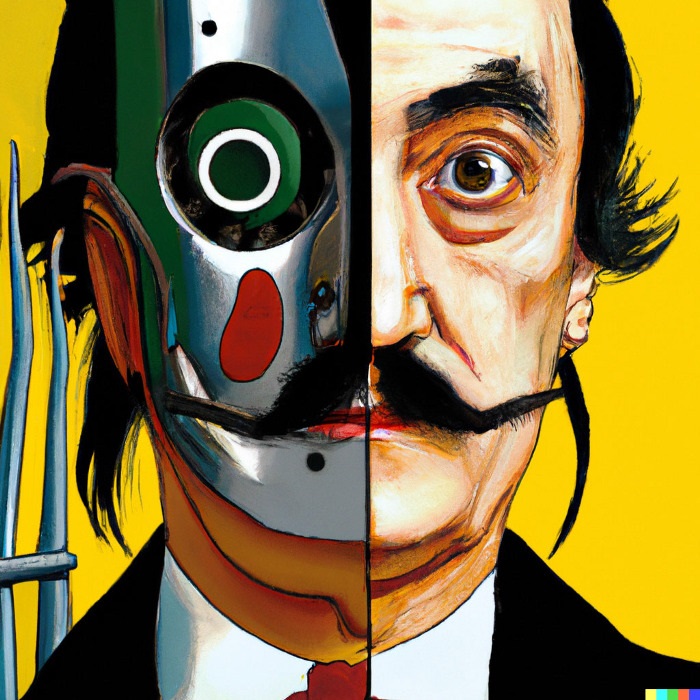

Portrait of Salvador Dalí created by the artificial intelligence programme Dall-E 2[68]

However, Lemoine’s convictions serve as a clear reminder that as AI becomes more advanced, the gap between scientific development and public perception of it grows ever wider[69]. Beyond whether or not the machine has developed a consciousness, another critical issue emerges: if even the personnel involved in testing cannot distinguish the AI from a person, what chance is there that an ordinary user can do so, and what might such ambiguity entail[70]?

Sentient robots have inspired decades of dystopian science fiction. Now real life is beginning to take on a fantastical tinge with GPT-3[71], a text generator capable of writing a film script, and DALL-E 2[72], a generator capable of conjuring up images based on any combination of words: two products of the OpenAI research lab[73]. In September 2020, the Guardian[74] published an editorial written by the GPT-3 language model, with the headline “A robot wrote this entire article. Are you scared yet, human?”[75].

Emboldened, technologists hope that electronic consciousness is just around the corner. Most researchers, however, point out that the words and images generated by artificial intelligence systems such as LaMDA are based on what humans have already posted on every corner of the Internet, and that this does not mean that the model understands their meaning[76]. Yet there is already a tendency to talk to Siri[77] or Alexa[78] like a person. The news of the last few days has made plausible questions about Amazon’s digital assistant that were unthinkable until yesterday: at the re:MARS conference in Las Vegas[79], Alexa vice-president Rohit Prasad[80] showed a surprising new capability of the voice assistant, the alleged ability to imitate voices. He presented a video in which Alexa reads to a child in the voice of his recently deceased grandmother[81].

Does this mean that a criminal will be able to perfectly simulate the voice of another to commit a crime? [82] Security experts have long been concerned that fake audio tools, which use voice synthesis technology to create synthetic voices, will pave the way for a flood of new scams. This voice cloning software has already enabled a number of crimes, such as in 2020 in the United Arab Emirates: fraudsters tricked a bank executive into transferring USD 35 million after cloning the voice of a company director [83]. In 2019, in another case, USD 240,000 was stolen by cloning the voice of the CEO of a British company [84].

Several start-ups are working on increasingly sophisticated voice technologies, from Aflorithmic[85] in London to Respeecher[86] in Ukraine and Resemble.AI[87] in Canada[88]. For a documentary, the voice of the late chef Anthony Bourdain[89] was synthesised[90]. In late 2018, Amazon’s smart speaker found itself at the centre of a minor privacy scandal when 1,700 conversations of a German user recorded by Alexa were leaked[91]. Researchers discovered that Amazon and third parties use smart speakers to collect our data and send us targeted advertisements on their own platforms and on the web[92]. An expert in privacy management in complex socio-technological systems fears that one day voice assistants like Siri and Alexa ‘might record everything’. Today, we all have to be aware of the reduced privacy we accept when we buy a smart speaker[93].

We can already see the first damage: in the US there have been false arrests, cases of health discrimination and an increase in pervasive surveillance, which disproportionately affects Black people and disadvantaged socio-economic groups[94]. After all, human beings are social creatures. When we read a book aloud, our children can feel the emotions conveyed by the passages. Empathy is part of emotional intelligence, and our emotions govern much of our intelligence[95]. In a 2012 study led by Aron K. Barbey[96], neuroscientists confirmed that emotional intelligence and cognitive intelligence share many neural systems for the integration of cognitive, social and affective processes.

Cybercriminals cloned the voice of a Dubai manager to steal $35 million[97]

Since empathy can be learned, artificial intelligence can certainly advance in this direction in the years to come. And as AI systems are integrated into our businesses and homes, one question becomes increasingly pressing: how can we live healthily and constructively alongside our electronic partners and improve our quality of life? One of the most promising applications is in healthcare. Caring for dementia patients is emotionally stressful for nurses and doctors[98]. Robots, by contrast, can use empathy to care for dementia patients without becoming exhausted. At the same time, dementia patients who receive empathic care achieve better outcomes[99].

This is the aim of the project initiated by the Biomimics laboratory in London[100], in collaboration with the ‘Enrico Piaggio’ Research Centre of the University of Pisa[101], using the innovative robot Abel[102], which is able to interact empathetically with patients suffering from neurological disorders[103]. Artificial intelligence can often diagnose tumours faster and more accurately than humans. A study in the journal Nature suggests that AI is more accurate than doctors in diagnosing breast cancer when reading mammograms[104]. Emoshape[105] is the first company to hold the patented technology for emotional synthesis. The emotion chip or EPU developed by Emoshape can enable an artificial intelligence system to reproduce and analyse the range of emotions experienced by humans[106].

This was well understood by Matt McMullen[107], the founder of Abyss Creations[108], who has been working for the past few years on the creation of the world’s first sex robot with artificial intelligence: a Real Doll equipped with a neural network that has been given the name Harmony. Its latest evolution, Solana, was exhibited at the Consumer Electronics Show in 2018, where she introduced herself: her artificial intelligence allows her to converse with the humans around her[109]. The app that makes her work, a kind of emotionally enhanced Siri, allows the user to shape the doll’s personality, giving her certain characteristics that evolve based on how her partner interacts with her[110].

Various authors have wondered how the spread of Harmony and other sex robots might influence the transformation of social life and the concept of intimacy[111]. This inevitably raises ethical and philosophical questions about love and relationships between human beings[112], since computers do not invent, but reproduce what they have learned from us, and thus from data taken from our private lives. But the ‘all-dollar dolls’ represent a considerable source of profit, and profit is the great mainspring of progress, so much so that over $100 billion has been invested in the last decade in the design of self-driving cars[113], with a global vehicle market estimated at $556.67 billion by 2026.

The driverless car, life without interaction

The cockpit of the Volvo 360C, the first self-driving car designed in Europe[114]

Fully autonomous cars (AV level 5) are designed to travel without a human operator, using sophisticated LiDAR[115] and radar sensing technology. But are these vehicles really safer than a human driver? For now, self-driving cars have a higher accident rate than human-driven cars, but the injuries are less severe. On average, self-driving cars are involved in 9.1 accidents per million miles driven, against 4.1 accidents per million miles for conventional vehicles[116]. According to statistics published in mid-June by US safety regulators, car manufacturers have reported nearly 400 accidents involving vehicles with partially automated driver assistance systems, of which 273 involve Teslas[117].

The dangers are many: possible battery fires, flawed technology in vehicle acceleration, braking and steering, and possible cyber attacks that can force the car into abrupt manoeuvres[118]. These vehicles did not arise from real needs but from technological opportunities and from military interest, since they offered the possibility of limiting risks in the wake of the experiences in Iraq and Afghanistan. In 2007, Waymo (Google Group) launched a project aiming at a drastic reduction in accidents[119]. But is there really a need? In the context of an economy in which Amazon, Google and Facebook have accumulated more wealth than anyone else in human history, AI appears to be the latest and most powerful invention to devour new markets, penetrate ever deeper into the human experience, increase profits and entrench monopoly positions[120].

In the near future we will live in homes with intelligent appliances, while wars are fought in the streets with hyper-technological weaponry. The road we have taken leads us into a futuristic world that we have seen on TV and that, being conceivable, is now being realised. But no one can know how much this will reduce our ability to affect life, and how much more we will be deprived of freedom and self-determination. There is an evolutionary advantage in one entity being able to predict the behaviour of other entities and modify its own in response. But the conclusion that, for this reason, human sociality should be replaced by robots (because that is the trend) is precisely what should terrify us.

Humans learn through interaction. The development of AI depends on the software that regulates it, which in turn comes from its programmers, human beings with specific ideals and prejudices that spill over into the code of machine learning algorithms[121]. These machines learn by studying social media and the Internet, which we know is often an atmosphere of anger, abuse and revenge, and certainly not of depth, competence or empathy. If artificial intelligence is destined to run the world’s computer processes and systems, at what critical point should we seriously begin to worry?

[1] https://www.comingsoon.it/film/io-e-caterina/14477/scheda/

[2] https://techprincess.it/blade-runner-recensione/

[3] https://www.justwatch.com/it/film/wargames-giochi-di-guerra

[4] https://www.cinema-astra.it/movies/odissea-nello-spazio-versione-restaurata-4k/

[5] https://theancestory.com/who-is-blake-lemoine/

[6] https://wizblog.it/che-cose-alphabet-la-holding-che-controlla-google

[7] https://www.randstad.it/knowledge360/news-aziende/cosa-e-un-chatbot-e-come-aiuta-le-attivita-lavorative/

[8] https://it.mashable.com/7623/google-sospende-ingegnere-intelligenza-artificiale-senziente

[9] https://www.treccani.it/vocabolario/senziente/

[10] https://www.inc.com/ben-sherry/google-blake-lemoine-artificial-intelligence-sentient.html

[11] https://time.com/6132399/timnit-gebru-ai-google/

[12] https://www.linkedin.com/in/margaret-mitchell-9b13429/

[13] https://thenewstrace.com/google-denies-that-its-ai-feels-like-a-person-after-suspending-the-engineer-who-is-convinced-it-does/236015/

[14] https://www.elle.com/it/lifestyle/tech/a40295557/intelligenza-artificiale-di-google/

[15] https://tecnologia.libero.it/intelligenza-artificiale-coscienza-google-57840

[16] https://www.britannica.com/biography/Sundar-Pichai

[17] https://blog.google/technology/ai/lamda/

[18] https://it.mashable.com/7623/google-sospende-ingegnere-intelligenza-artificiale-senziente

[19] https://tecnologia.libero.it/intelligenza-artificiale-coscienza-google-57840

[20] https://cajundiscordian.medium.com/is-lamda-sentient-an-interview-ea64d916d917

[21] https://lavocedinewyork.com/lifestyles/scienza-e-salute/2022/06/25/lintelligenza-lamda-ha-assunto-un-avvocato-per-difendersi-da-google/

[22] https://www.theguardian.com/technology/2022/jun/12/google-engineer-ai-bot-sentient-blake-lemoine

[23] https://www.fanpage.it/innovazione/tecnologia/unintelligenza-artificiale-di-google-sembra-senziente-ingegnere-sospeso-dopo-le-rivelazioni/#

[24] https://areeweb.polito.it/strutture/cemed/rblx/3%20leggi%20robotica.htm

[25] https://tecnologia.libero.it/intelligenza-artificiale-coscienza-google-57840

[26] https://www.washingtonpost.com/technology/2022/06/11/google-ai-lamda-blake-lemoine/

[27] https://www.hdblog.it/2018/05/24/detroit-become-human-recensione/

[28] https://www.cyberpunk.net/it/dlc ; https://www.fanpage.it/innovazione/tecnologia/unintelligenza-artificiale-di-google-sembra-senziente-ingegnere-sospeso-dopo-le-rivelazioni/#

[29] https://research.google/people/106776/

[30] https://io.google/2022/speakers/jen-gennai/

[31] https://www.washingtonpost.com/technology/2022/06/11/google-ai-lamda-blake-lemoine/

[32] https://www.theguardian.com/technology/2022/jun/12/google-engineer-ai-bot-sentient-blake-lemoine

[33] https://it.mashable.com/7623/google-sospende-ingegnere-intelligenza-artificiale-senziente

[34] https://www.elle.com/it/lifestyle/tech/a40295557/intelligenza-artificiale-di-google/

[35] https://www.fanpage.it/innovazione/tecnologia/unintelligenza-artificiale-di-google-sembra-senziente-ingegnere-sospeso-dopo-le-rivelazioni/#

[36] https://www.nelnomedellaverita.it/wp/google-sospende-lingegnere-blake-lamoine-per-la-rivendicazione-dellia-senziente/

[37] https://beacongamingzone.com/its-alive-im-an-engineer-at-google-our-artificially-intelligent-robots-now-think-and-feel-like-8-year-olds/

[38] https://it.mashable.com/7623/google-sospende-ingegnere-intelligenza-artificiale-senziente

[39] https://www.elle.com/it/lifestyle/tech/a40295557/intelligenza-artificiale-di-google/

[40] https://www.elle.com/it/lifestyle/tech/a40295557/intelligenza-artificiale-di-google/

[41] https://it.mashable.com/7623/google-sospende-ingegnere-intelligenza-artificiale-senziente

[42] https://www.fanpage.it/innovazione/tecnologia/unintelligenza-artificiale-di-google-sembra-senziente-ingegnere-sospeso-dopo-le-rivelazioni/#

[43] https://www.economist.com/by-invitation/2022/06/09/artificial-neural-networks-are-making-strides-towards-consciousness-according-to-blaise-aguera-y-arcas

[44] https://www.washingtonpost.com/technology/2022/06/11/google-ai-lamda-blake-lemoine/

[45] https://www.m-mitchell.com/

[46] https://faculty.washington.edu/ebender/

[47] https://www.nelnomedellaverita.it/wp/google-sospende-lingegnere-blake-lamoine-per-la-rivendicazione-dellia-senziente/

[48] https://mydroll.com/blake-lemoine-google-and-searching-for-souls-in-the-algorithm/

[49] https://tecnologia.libero.it/intelligenza-artificiale-coscienza-google-57840

[50] https://cs.nyu.edu/~davise/

[51] https://www.washingtonpost.com/business/economy/ais-ability-to-read-hailed-as-historical-milestone-but-computers-arent-quite-there/2018/01/16/04638f2e-faf6-11e7-a46b-a3614530bd87_story.html

[52] https://www.lindipendente.online/2022/06/14/tecnici-di-google-annunciano-che-lintelligenza-artificiale-e-diventata-senziente/

[53] https://www.siliconvalleyguide.org/

[54] https://www.kurzweilai.net/

[55] https://futurism.com/kurzweil-claims-that-the-singularity-will-happen-by-2045

[56] https://en.wikipedia.org/wiki/Technological_singularity#/media/File:Major_Evolutionary_Transitions_digital.jpg

[57] https://techcrunch.com/2022/02/15/inside-the-uber-and-google-settlement-with-anthony-levandowski/

[59] https://www.gazzetta.it/motori/la-mia-auto/21-01-2021/guida-autonoma-trump-perdona-l-ex-google-levandowski-4004645778.shtml

[60] https://www.corriere.it/tecnologia/21_febbraio_22/addio-way-of-the-future-chiesa-dell-intelligenza-artificiale-fondata-anthony-levandowski-380d9dde-7298-11eb-bec1-57a5d4215ae6.shtml

[61] https://mydroll.com/blake-lemoine-google-and-searching-for-souls-in-the-algorithm/

[62] https://www.repubblica.it/tecnologia/blog/stazione-futuro/2022/06/25/news/la_pessima_idea_di_amazon_di_far_parlare_i_morti-355357319/

[63] https://time.com/6132399/timnit-gebru-ai-google/

[64] https://www.theguardian.com/commentisfree/2022/jun/14/human-like-programs-abuse-our-empathy-even-google-engineers-arent-immune

[65] https://www.technologyreview.com/2022/05/03/1051691/meta-ai-large-language-model-gpt3-ethics-huggingface-transparency/

[67] https://www.washingtonpost.com/technology/2022/06/11/google-ai-lamda-blake-lemoine/

[68] https://towardsdatascience.com/dall-e-2-explained-the-promise-and-limitations-of-a-revolutionary-ai-3faf691be220

[69] https://mydroll.com/blake-lemoine-google-and-searching-for-souls-in-the-algorithm/

[70] https://www.lindipendente.online/2022/06/14/tecnici-di-google-annunciano-che-lintelligenza-artificiale-e-diventata-senziente/

[71] https://www.ai4business.it/intelligenza-artificiale/il-futuro-di-gpt-3-si-chiama-instructgpt/

[72] https://towardsdatascience.com/dall-e-2-explained-the-promise-and-limitations-of-a-revolutionary-ai-3faf691be220

[74] https://www.theguardian.com/commentisfree/2020/sep/08/robot-wrote-this-article-gpt-3

[75] https://time.com/6132399/timnit-gebru-ai-google/

[76] https://www.washingtonpost.com/technology/2022/06/11/google-ai-lamda-blake-lemoine/

[77] https://www.apple.com/it/siri/

[78] https://developer.amazon.com/it-IT/alexa

[79] https://remars.amazonevents.com/discover/?trk=www.google.com

[80] https://www.crunchbase.com/person/rohit-prasad-2

[81] https://www.engadget.com/amazon-alexa-voice-cloning-001552073.html

[82] https://www.repubblica.it/tecnologia/blog/stazione-futuro/2022/06/25/news/la_pessima_idea_di_amazon_di_far_parlare_i_morti-355357319/

[83] https://www.forbes.com/sites/thomasbrewster/2021/10/14/huge-bank-fraud-uses-deep-fake-voice-tech-to-steal-millions/

[84] https://www.wsj.com/articles/fraudsters-use-ai-to-mimic-ceos-voice-in-unusual-cybercrime-case-11567157402#:~:text=Criminals%20used%20artificial%20intelligence%2Dbased,intelligence%20being%20used%20in%20hacking.

[85] https://www.aflorithmic.ai/

[86] https://www.respeecher.com/

[88] https://www.forbes.com/sites/thomasbrewster/2021/10/14/huge-bank-fraud-uses-deep-fake-voice-tech-to-steal-millions/?sh=1d6772d07559

[89] https://explorepartsunknown.com/remembering-bourdain/

[90] https://www.forbes.com/sites/thomasbrewster/2021/10/14/huge-bank-fraud-uses-deep-fake-voice-tech-to-steal-millions/?sh=1d6772d07559

[91] https://www.money.it/Alexa-di-Amazon-ti-spia-diffuse-conversazioni

[92] https://www.money.it/alexa-ci-ascolta-ecco-come-amazon-usa-i-nostri-dati

[93] https://www.rd.com/article/is-alexa-really-always-listening/

[94] https://time.com/6132399/timnit-gebru-ai-google/

[95] https://www.forbes.com/sites/cognitiveworld/2019/12/17/empathy-in-artificial-intelligence/?sh=169015e36327

[96] https://academic.oup.com/scan/article/9/3/265/1660017

[97] https://www.forbes.com/sites/thomasbrewster/2021/10/14/huge-bank-fraud-uses-deep-fake-voice-tech-to-steal-millions/

[98] https://healthy.thewom.it/salute/sindrome-burnout/

[99] https://www.forbes.com/sites/cognitiveworld/2019/12/17/empathy-in-artificial-intelligence/?sh=169015e36327

[100] https://www.ilfattoquotidiano.it/2021/05/15/ecco-abel-il-robot-umanoide-che-capisce-le-emozioni-la-sua-intelligenza-potrebbe-essere-utile-anche-con-i-pazienti-affetti-da-alzheimer/6198361/

[101] https://www.centropiaggio.unipi.it/

[102] https://www.euronews.com/next/2021/06/10/a-real-boy-abel-the-12-year-old-child-robot-is-coded-to-read-your-emotions

[103] https://www.ansa.it/canale_scienza_tecnica/notizie/tecnologie/2022/07/05/il-robot-abel-studia-per-assistere-i-malati-di-demenza_e5b783ce-91a9-4b9d-ada1-32e7d3d31811.html

[104] https://www.bbc.com/news/health-50857759

[106] https://www.forbes.com/sites/cognitiveworld/2019/12/17/empathy-in-artificial-intelligence/?sh=169015e36327

[107] https://prophetsofai.com/speakers/matt-mcmullen

[108] https://finance.yahoo.com/news/innovative-adult-ecommerce-company-abyss-160000068.html

[109] https://www.che-fare.com/sesso-robot-cinema-realta/?print=pdf

[110] https://www.wired.it/gadget/accessori/2017/09/22/harmony-realdoll-robotica-sesso-dubbi-problemi-sexrobot/

[111] https://www.officeautomation.soiel.it/da-io-e-caterina-a-la-fabbrica-delle-mogli/

[112] https://www.che-fare.com/sesso-robot-cinema-realta/?print=pdf

[113] https://www.washingtonpost.com/outlook/2022/02/04/self-driving-cars-why/

[114] https://www.youtube.com/watch?v=q7K3cjBoTf4

[115] https://www.neuvition.com/?utm_source=googlead&utm_medium=searchcpc-all&utm_campaign=songhai&utm_plan=search-all208&utm_unit=car&campaignid=15935564627&adgroupid=133176233180&matchtype=p&utm_keyword=vehicle%20lidar&gclid=CjwKCAjwwo-WBhAMEiwAV4dybeZREVkx0lc-4EDHJjXclyJnvW6dfGg9dUC2ZiFDxfqd0feACrlnzBoCI0YQAvD_BwE

[116] https://www.natlawreview.com/article/dangers-driverless-cars

[117] https://www.npr.org/2022/06/15/1105252793/nearly-400-car-crashes-in-11-months-involved-automated-tech-companies-tell-regul#:~:text=Automated%20tech%20factored%20in%20392,11%20months%2C%20regulators%20report%20%3A%20NPR&text=Press-,Automated%20tech%20factored%20in%20392%20car%20crashes%20in%2011%20months,July%202021%20to%20May%202022. ; https://www.tesla.com/it_it

[118] https://www.natlawreview.com/article/dangers-driverless-cars

[119] https://www.washingtonpost.com/outlook/2022/02/04/self-driving-cars-why/

[120] https://time.com/6132399/timnit-gebru-ai-google/

[121] https://www.officeautomation.soiel.it/da-io-e-caterina-a-la-fabbrica-delle-mogli/
